How AI-Generated Content Can Get You into Trouble & Why You Should Avoid AI as a Student – 2026 Guide

- I. Introduction

- II. The Technical Risks: Detection and False Positives

- III. The Academic & Professional Consequences

- IV. The “Hidden” Legal and Ethical Troubles

- V. Why Human Writing Wins (The Value of the Struggle)

- VI. The “Safe” Way to Use AI in 2026

- VII. Conclusion

- Frequently Asked Questions (FAQ)

I. Introduction

Artificial intelligence has become impossible to ignore in 2026. From search engines that summarize entire articles to classroom tools that help students brainstorm ideas, AI is now embedded in nearly every stage of the learning process. Many students encounter AI-powered writing assistants daily, and the promise of quickly generating essays, reports, or discussion posts can be incredibly tempting. For a busy student juggling coursework, part-time jobs, and personal responsibilities, the idea of an “easy A” produced in seconds can feel like the perfect solution.

However, the reality of modern education tells a very different story. Universities, colleges, and even high schools have rapidly adapted to the rise of generative AI. What once existed as a technological gray area is now a clearly regulated aspect of academic integrity policies. Institutions have introduced strict rules regarding the use of AI-generated content, often requiring students to disclose any AI assistance or prohibiting it entirely in graded assignments. As a result, submitting AI-generated academic work is no longer viewed as an experimental shortcut—it is increasingly treated as a direct violation of academic integrity.

While artificial intelligence can be a powerful research companion—helping students locate information, summarize large texts, or organize ideas—using it to generate entire assignments introduces serious risks. These risks extend beyond receiving a poor grade. Students who rely on AI-generated academic content may jeopardize their academic reputation, face disciplinary consequences, and ultimately undermine their intellectual development.

This guide explores the evolving dangers of AI dependency in education and explains why producing original work remains the safest and most responsible approach for students in 2026.

II. The Technical Risks: Detection and False Positives

One of the biggest misconceptions among students is the belief that AI-generated content is impossible to detect. In the early days of generative AI, detection systems were inconsistent and often unreliable. By 2026, the situation has changed dramatically. Educational institutions and technology companies are engaged in what many experts call an “AI arms race.” As generative tools become more sophisticated, detection systems evolve just as quickly.

Modern AI detection software uses advanced techniques such as linguistic fingerprinting, probabilistic pattern analysis, and watermarking technologies embedded within certain AI-generated outputs. These systems analyze sentence structure, word distribution, and statistical patterns that are commonly produced by language models. When a piece of writing displays these patterns at scale, detection software can flag it as likely AI-generated, although a flag is a statistical estimate rather than proof of AI use.

Additionally, many institutions now combine automated detection tools with human review. Instructors who suspect AI usage can compare the flagged assignment with a student’s previous writing samples, discussion posts, or in-class essays. If the writing style differs significantly from the student’s known work, the submission may trigger an academic integrity investigation.
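The idea of comparing a submission with a student's earlier writing can be illustrated with a toy sketch. It is not how any real detection product works: the three features (average sentence length, vocabulary variety, average word length) and the function names `style_features` and `style_distance` are assumptions chosen purely for illustration.

```python
import re
from statistics import mean


def style_features(text: str) -> dict[str, float]:
    """Compute a few simple, illustrative style features for a text."""
    # Split on sentence-ending punctuation and drop empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text.lower())
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    return {
        "avg_sentence_length": len(words) / len(sentences),
        "vocabulary_variety": len(set(words)) / len(words),
        "avg_word_length": mean(len(w) for w in words),
    }


def style_distance(a: str, b: str) -> float:
    """Sum of relative feature differences between two texts (0 means identical features)."""
    fa, fb = style_features(a), style_features(b)
    return sum(abs(fa[k] - fb[k]) / max(fa[k], fb[k]) for k in fa)
```

A large distance between a new submission and earlier samples would, at most, be a reason for a human reviewer to look more closely. On its own it shows nothing about how the text was produced.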

Another technical danger lies in what researchers refer to as AI “hallucinations.” Despite improvements in large language models, AI systems still generate incorrect information with surprising confidence. These hallucinations can appear as fabricated statistics, nonexistent research studies, or completely invented citations. A student submitting AI-generated work may unknowingly include references to academic papers that do not exist, or misstatements of historical facts, legal precedents, or scientific findings.

For example, an AI tool might cite a journal article with realistic formatting—complete with author names, publication years, and page numbers—but the article may never have been published. If an instructor attempts to verify the reference, the inconsistency becomes immediately obvious. In academic writing, where credibility depends heavily on accurate citations, such errors can quickly undermine the entire assignment.

Bias is another overlooked technical issue. AI systems are trained on massive datasets drawn from the internet, books, and publicly available texts. These datasets inevitably contain social, cultural, and ideological biases. As a result, AI-generated content can sometimes reflect assumptions or perspectives that the student never intended to express. A seemingly neutral assignment could include subtle biases related to gender, culture, politics, or socioeconomic status.

In an academic environment that increasingly emphasizes critical thinking and ethical awareness, presenting biased or problematic content—even unintentionally—can negatively affect how instructors evaluate a student’s analytical abilities. Ultimately, relying on AI means relinquishing control over the intellectual tone and integrity of one’s own work.

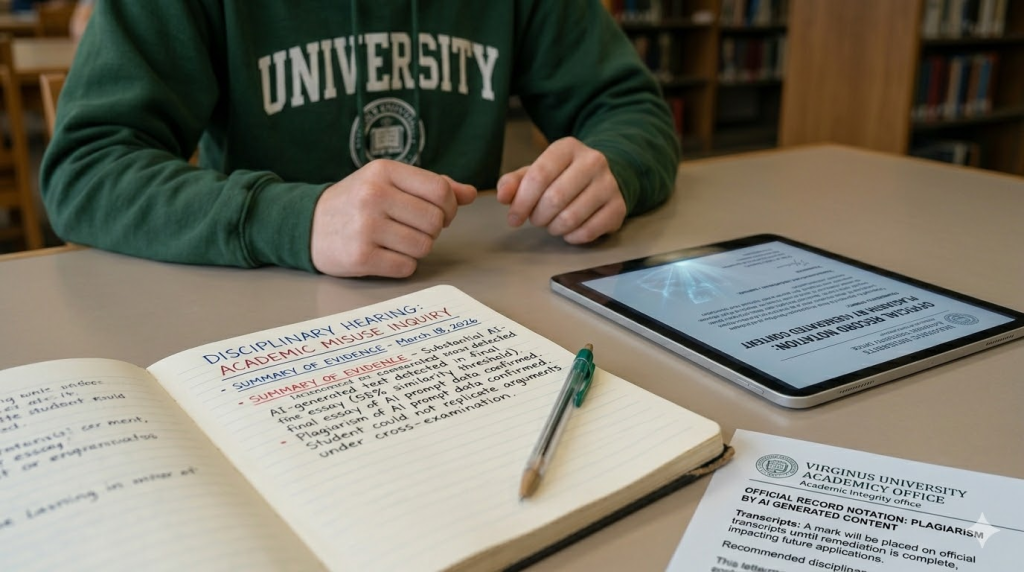

III. The Academic & Professional Consequences

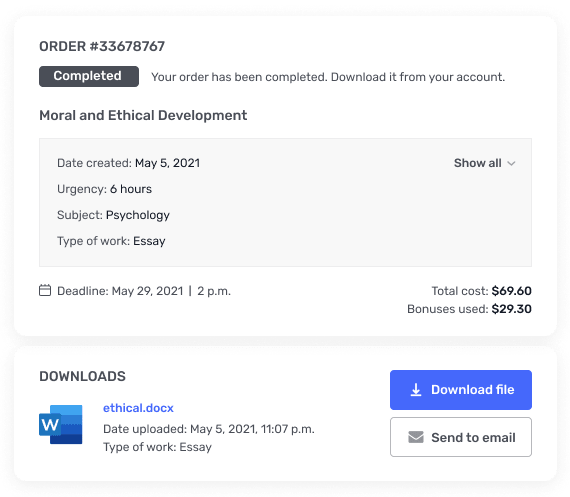

Beyond technical risks, the academic consequences of submitting AI-generated work are becoming more severe each year. Universities around the world have revised their academic integrity policies to address generative AI explicitly. In many institutions, submitting AI-generated text without proper authorization is now categorized as plagiarism or academic misconduct.

Some universities have adopted strict “zero-tolerance” policies for AI-generated submissions. Under these policies, students found to have submitted AI-written work may face immediate penalties, including failing the assignment, failing the entire course, or being placed on academic probation. In more serious cases, repeated violations can lead to suspension or expulsion.

Other institutions have implemented mandatory reporting systems. Instructors who detect AI misuse are required to file formal reports with academic integrity offices. Once a report is submitted, the incident becomes part of the student’s institutional record and may trigger a disciplinary review process.

One of the most serious consequences students rarely consider is the possibility of permanent notations on their academic transcript. Certain institutions record academic misconduct violations directly on official transcripts or internal academic files. These records may be reviewed when a student applies for graduate school, professional certifications, or competitive internships.

Graduate admissions committees often evaluate more than just grades. They also examine academic conduct and ethical standing. A record of academic dishonesty—even if it occurred early in a student’s academic career—can significantly reduce admission chances at reputable programs.

The long-term professional implications can also be substantial. Many employers place a strong emphasis on integrity, originality, and problem-solving ability. If a graduate has spent years relying on AI to generate assignments, they may struggle when required to produce original reports, research summaries, or analytical writing in a professional environment.

Perhaps the most significant consequence of AI dependency is the loss of intellectual development. Writing assignments are not simply exercises in producing text—they are structured opportunities for students to develop critical thinking, analytical reasoning, and communication skills. When students bypass this process by outsourcing their thinking to AI, they lose the chance to refine these essential abilities.

Furthermore, developing a unique academic voice takes time and consistent practice. Each essay, research paper, or discussion response contributes to a student’s evolving intellectual identity. Overreliance on AI-generated writing can interrupt this development, leaving students with limited confidence in their own analytical skills.

In today’s competitive job market, originality and clarity of thought are valuable professional assets. Employers consistently seek candidates who can communicate ideas effectively, evaluate complex problems, and present well-reasoned arguments. Students who depend heavily on AI tools may find themselves at a disadvantage compared to peers who developed these skills through genuine academic engagement.

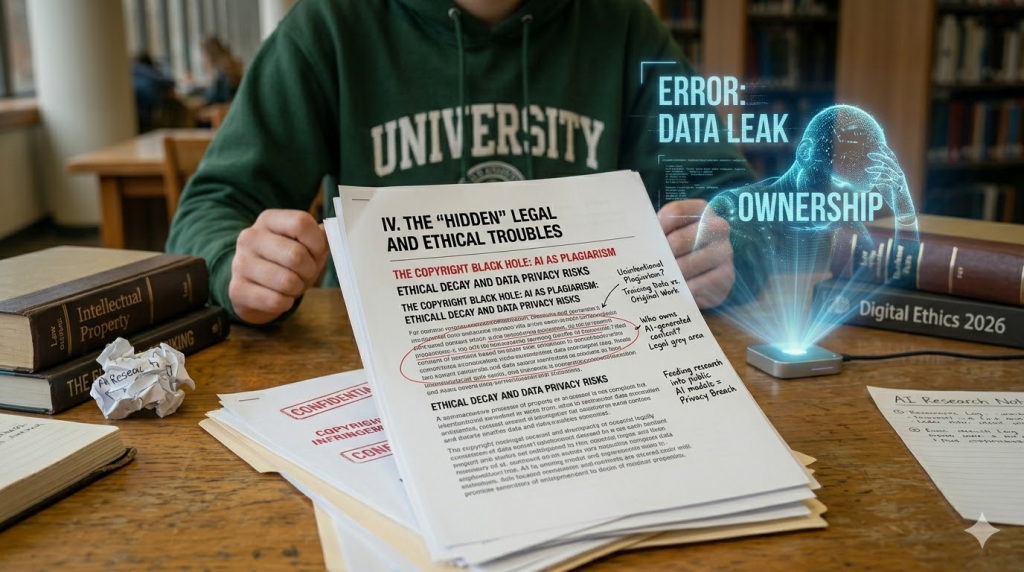

IV. The “Hidden” Legal and Ethical Troubles

Beyond academic penalties and detection technologies, AI-generated academic content introduces deeper legal and ethical complications that many students rarely consider. These issues are not always visible at first glance, but they can create serious problems related to intellectual property, privacy, and academic integrity.

Copyright and Ownership

One of the biggest unresolved questions surrounding generative AI in 2026 involves the ownership of AI-generated text. When a student uses an AI system to produce an essay, it is not always clear who legally owns the content. Some AI platforms claim limited rights over generated outputs, while others operate under terms that allow them to reuse user prompts to further train their systems.

At the same time, AI models are trained on vast collections of books, journal articles, websites, and other written materials. Because of this training method, AI-generated responses may unintentionally replicate phrases, arguments, or structures that resemble existing copyrighted works. Even if the student did not intentionally copy another author, the resulting text could still resemble published material closely enough to trigger plagiarism detection systems.

This situation creates what scholars sometimes describe as “unintentional plagiarism.” The student did not copy a source directly, yet the generated content may echo existing material without proper attribution. In academic environments where originality and proper citation are essential, such cases can still lead to accusations of misconduct.

Until legal frameworks fully address AI-generated authorship, students who rely on AI to produce academic work may unknowingly enter a legal gray area involving intellectual property and copyright compliance.

Privacy Concerns

Another often-overlooked risk involves the privacy of the information students submit to AI systems. Many AI tools operate on cloud-based platforms that store and analyze user prompts to improve future model performance. When students paste research notes, draft ideas, or assignment instructions into these tools, that information may be processed, logged, or retained by the service provider.

This becomes especially problematic when students input sensitive material such as unpublished research, confidential case studies, or personal reflections required for coursework. Once this information is submitted to a public AI service, the student may lose full control over how it is stored or used.

In research-intensive fields, this risk becomes even more significant. Students working on dissertations, thesis projects, or collaborative research may inadvertently expose proprietary data or preliminary findings through AI prompts. If such information later appears in other generated responses or becomes accessible through training data, it could compromise the originality or confidentiality of the research.

Therefore, students must recognize that using AI tools is not only an academic decision but also a data privacy decision.

Academic Dishonesty vs. Ethical Decay

There is also a deeper ethical question surrounding AI use in education. Many educators distinguish between using AI as a “calculator for words” and using it as an “engine for thoughts.”

Using AI as a calculator might involve asking it to explain a difficult concept, summarize a complex reading, or suggest potential research directions. In this role, the AI acts as a support tool that enhances understanding without replacing the student’s intellectual effort.

However, when students ask AI to produce entire essays, discussion posts, or research arguments, the tool becomes an engine that generates the thinking itself. At that point, the student is no longer engaging in the core learning process that assignments are designed to encourage.

This shift raises important ethical concerns. Education is not simply about producing written documents—it is about cultivating reasoning, analysis, and intellectual independence. When students consistently rely on AI to generate their thoughts, the boundary between assistance and academic dishonesty becomes increasingly blurred.

Over time, this reliance can lead to what some educators describe as “ethical decay,” where the habit of outsourcing intellectual effort gradually weakens a student’s sense of academic responsibility.

V. Why Human Writing Wins (The Value of the Struggle)

Although AI-generated writing may appear efficient, the process of writing independently offers cognitive and intellectual benefits that AI cannot replicate. The effort involved in constructing an argument, organizing ideas, and articulating thoughts is precisely what helps students grow academically.

Critical Thinking Development

Writing is fundamentally a thinking process. When students draft an essay, they are forced to analyze sources, evaluate evidence, and determine how ideas connect. Each sentence requires decisions about clarity, logic, and relevance.

This process develops critical thinking skills that extend far beyond the classroom. By engaging directly with complex ideas, students learn how to question assumptions, compare perspectives, and defend their own viewpoints with evidence.

When AI generates the writing instead, this cognitive process is largely bypassed. The assignment may still appear complete, but the student misses the intellectual exercise that transforms information into knowledge.

Emotional Intelligence (EQ)

High-quality academic writing often includes subtle forms of emotional intelligence. Personal insight, cultural awareness, and lived experiences allow students to interpret information in meaningful ways. These elements help essays move beyond simple summaries toward thoughtful analysis.

AI systems, however, do not possess lived experiences. They generate text by identifying patterns in language rather than by reflecting on genuine human perspectives. As a result, AI-generated writing often feels generic, detached, or overly mechanical.

Professors frequently look for authentic engagement with a topic. They want to see how students interpret ideas, challenge assumptions, and connect theory with real-world observations. Essays that demonstrate empathy, reflection, and intellectual curiosity often stand out because they reveal the student behind the writing.

These qualities remain uniquely human and cannot be convincingly replicated by automated systems.

Memory Retention

Another major advantage of manual writing involves knowledge retention. Educational research consistently shows that students remember information more effectively when they actively process and synthesize it themselves.

When students read sources, organize notes, and convert those ideas into their own words, they engage multiple cognitive pathways that reinforce memory formation. This process strengthens long-term understanding of the subject matter.

In contrast, relying on generative shortcuts reduces this engagement. If AI produces the majority of the text, the student may only skim the output rather than deeply analyze the material. As a result, the information is less likely to be retained after the assignment is submitted.

In practical terms, this means that students who rely heavily on AI may struggle during exams, class discussions, or future courses that build on earlier material.

VI. The “Safe” Way to Use AI in 2026

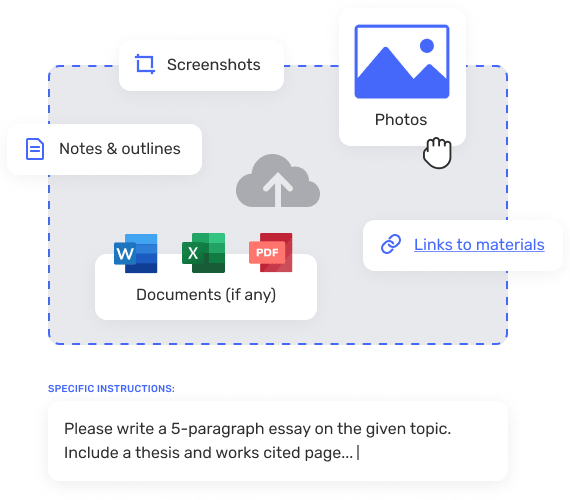

Despite the risks associated with AI-generated assignments, artificial intelligence can still serve as a useful academic tool when used responsibly. The key is understanding how to integrate AI in ways that support learning rather than replace it.

AI as a Librarian, Not a Ghostwriter

One of the safest ways to use AI in academic work is to treat it like a digital research assistant rather than a writer. For example, AI can help brainstorm potential essay topics, explain complex theories in simpler terms, or suggest possible research directions.

Students may also use AI to organize study schedules, generate practice questions, or summarize lengthy readings to gain an initial understanding of a topic. In these roles, AI functions as a supportive tool that enhances comprehension without replacing the student’s intellectual contribution.

However, drafting entire essays or assignments should remain the student’s responsibility.

Verification Strategies

Whenever AI is used for research support, verification becomes essential. Students should independently confirm every fact, statistic, and citation provided by the AI system.

This process typically involves locating the original sources in academic journals, textbooks, or reputable databases. If an AI-generated citation cannot be verified through credible sources, it should not be included in the assignment.

Students should also compare multiple sources to ensure that the information presented is accurate and contextually appropriate. By maintaining strict verification practices, students can avoid the risk of including fabricated or misleading information in their work.
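One way to keep this verification disciplined is to track each citation until it has been checked by hand. The sketch below is only an illustration: the `Citation` class, its fields, and the `unverified` helper are hypothetical names, the references are placeholders, and the checking itself still has to be done by the student against the original source.

```python
from dataclasses import dataclass


@dataclass
class Citation:
    reference: str                 # the citation as it appears in the draft
    source_located: bool = False   # original found in a journal, textbook, or database
    claim_matches: bool = False    # the source actually supports the claim being made

    @property
    def verified(self) -> bool:
        # A citation counts as verified only when both manual checks have passed.
        return self.source_located and self.claim_matches


def unverified(citations: list[Citation]) -> list[str]:
    """Return the references that should not be included in the assignment yet."""
    return [c.reference for c in citations if not c.verified]


citations = [
    Citation("Example reference A", source_located=True, claim_matches=True),
    Citation("Example reference B", source_located=True),
    Citation("Example reference C"),
]
print(unverified(citations))  # ['Example reference B', 'Example reference C']
```

Keeping the two checks separate reflects the point above: finding a source is not enough if it does not actually support the claim attributed to it.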

Transparency

Transparency is another important element of responsible AI use. Many universities now encourage or require students to disclose when AI tools have assisted with certain aspects of their work.

This disclosure can take the form of a brief statement explaining how AI was used—for example, whether it helped brainstorm ideas or summarize background information. Informing instructors about AI assistance maintains trust and demonstrates academic honesty.

More importantly, transparency reinforces the principle that AI should support learning rather than replace it.

VII. Conclusion

The rapid expansion of artificial intelligence has transformed how students access information and complete academic tasks. While AI offers powerful tools for research and learning support, using it to generate academic content introduces significant risks.

Detection technologies have advanced, making AI-generated submissions increasingly easy to identify. AI systems can also produce inaccurate information, fabricated citations, and biased content that damages a student’s credibility. In addition, universities now enforce stricter academic integrity policies that treat unauthorized AI use as a serious violation.

Beyond institutional penalties, students face deeper legal, ethical, and intellectual consequences. Issues related to copyright, data privacy, and authorship create legal uncertainties, while heavy reliance on AI weakens critical thinking, personal expression, and long-term knowledge retention.

Ultimately, the purpose of education is not simply to complete assignments but to build the skills necessary for lifelong learning and professional success. Writing assignments serve as training grounds for analytical thinking, communication, and intellectual independence.

Students who commit to producing original work gain far more than a grade—they develop confidence in their own ideas and the ability to articulate them effectively.

In a world increasingly shaped by artificial intelligence, authentic human thinking remains one of the most valuable skills a student can cultivate. Taking pride in original writing, embracing the effort required to refine ideas, and engaging deeply with learning materials will always provide greater long-term rewards than any automated shortcut.

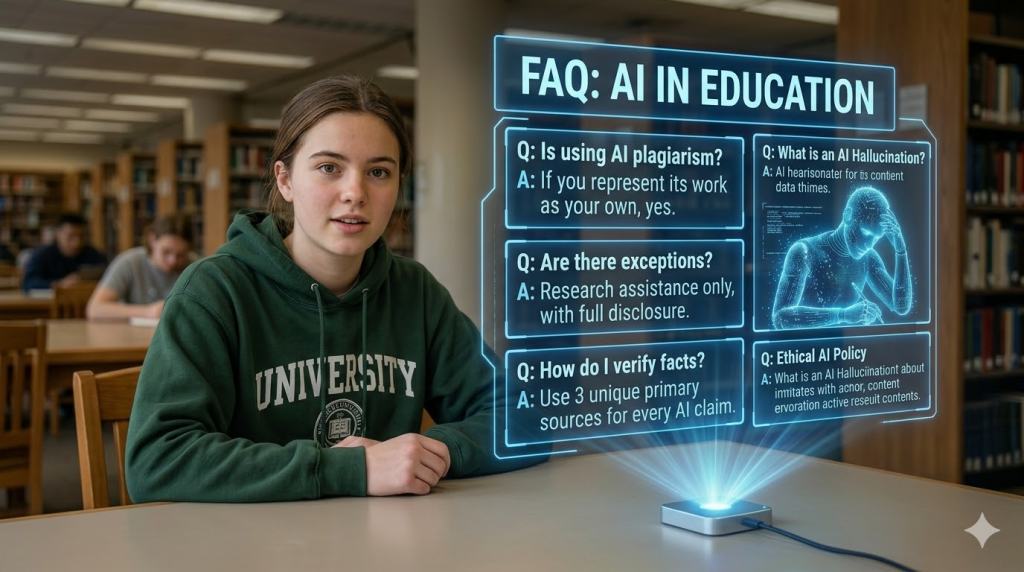

Frequently Asked Questions (FAQ)

1. Is using AI for school assignments illegal in 2026?

Using AI itself is not illegal, but how it is used matters greatly. Most universities and schools now treat submitting AI-generated content as a violation of academic integrity if the work is presented as the student’s own writing. In many institutions, this can fall under plagiarism or academic misconduct policies. Students are usually allowed to use AI for research support or idea generation, but generating entire essays or assignments without disclosure can lead to disciplinary action.

2. Can professors really detect AI-generated essays?

Yes, detection technology has improved significantly. Universities often use advanced AI detection tools that analyze writing patterns, sentence structures, and probability distributions associated with machine-generated text. In addition, professors may compare assignments with a student’s previous writing style. Sudden changes in tone, vocabulary, or structure can trigger further investigation.

3. What happens if a student is caught submitting AI-generated work?

Consequences vary by institution but can be serious. Common penalties include failing the assignment, failing the course, academic probation, or a disciplinary record at the university. For severe or repeated violations, students may face suspension or expulsion. Some institutions also record academic misconduct on official transcripts.

4. Why is AI-generated content considered academic dishonesty?

Academic assignments are designed to evaluate a student’s understanding, reasoning, and writing ability. When AI produces the content, the student is no longer demonstrating their own knowledge or analytical skills. Submitting AI-generated work without permission misrepresents authorship and therefore violates academic integrity principles.

5. What are AI “hallucinations” and why are they dangerous in academic writing?

AI hallucinations occur when an AI system generates information that appears accurate but is actually false. This may include invented statistics, fabricated citations, or references to studies that do not exist. If students include such information in their assignments, it can damage their credibility and lead instructors to question the validity of the entire paper.

6. Can AI-generated writing include plagiarism even if the student did not copy anything?

Yes. Because AI models are trained on massive datasets of existing texts, they may sometimes reproduce phrases, ideas, or structures that closely resemble published material. Even if the student did not intentionally copy another source, the similarity could still trigger plagiarism detection systems.

7. Is it safe to paste my research notes or assignment prompts into AI tools?

Students should be cautious when sharing information with AI platforms. Many AI tools store and analyze user inputs to improve their systems. This means that research notes, personal reflections, or unpublished academic work could be processed and retained by the service provider. For sensitive research or proprietary data, using public AI tools may present privacy risks.

8. How can students safely use AI without violating academic rules?

The safest approach is to treat AI as a learning assistant rather than a writer. Students can use AI to explain difficult concepts, brainstorm possible research questions, organize study schedules, or summarize background information. However, the actual writing, analysis, and argument development should be done by the student.

9. Why are writing assignments important if AI can generate essays quickly?

Writing assignments help students develop critical thinking, communication skills, and analytical reasoning. The process of organizing ideas and expressing them clearly strengthens understanding of the subject. When AI performs this work instead, students miss the opportunity to build these essential academic and professional skills.

10. Will relying on AI affect my future career?

It can. Employers value individuals who can analyze information, communicate clearly, and produce original ideas. Students who rely heavily on AI during their education may struggle when asked to complete reports, analyses, or presentations independently in professional settings.

11. Should students disclose when they use AI for academic work?

Yes, whenever possible. Many institutions now encourage transparency regarding AI assistance. If AI tools were used for brainstorming, summarizing research, or organizing ideas, informing the instructor can help maintain trust and ensure compliance with academic policies.

12. What is the best strategy for students who want to succeed academically in the AI era?

The most effective strategy is to combine responsible AI use with strong independent thinking. Students should focus on developing their own analytical voice, verifying information carefully, and producing original work. AI can assist learning, but genuine understanding and intellectual growth come from active engagement with the material.